What AI Can and Cannot Do in 2026 (And How to Use It Without Regrets)

A friend asked an AI tool a simple question last week: “What year did this company launch?” The answer came back fast, formatted nicely, and totally wrong. Confident wrong is still wrong, and that’s the part that trips people up.

Here’s the bottom line: AI is great at pattern-based work, fast drafts, and sorting messy info. At the same time, it doesn’t “know” things the way a person does. It predicts what text should come next based on data it has seen before.

This guide lays out what AI can do well, what it can’t do reliably (even now, in 2026), and how to make safe calls in real life and business. You’ll also get a simple checklist you can use today, whether you’re writing blog content, running a team, or building an affiliate site on nights and weekends.

What AI Can Do Really Well (And Why It Feels So Impressive)

AI shines when the task looks like this: you give it inputs, it spots patterns, and it produces a “good enough” output you can review. Speed matters here, because AI can do in minutes what might take you an hour.

That doesn’t mean it’s “smart” in a human way. It means it’s useful. Think of it like a power tool. A nail gun can build faster than a hammer, but you still choose where the nails go.

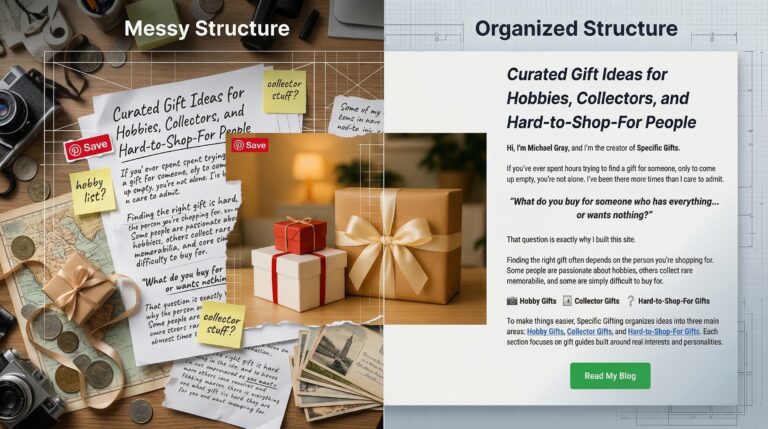

Turn Messy Ideas Into Drafts, Summaries, and Plans

If you’ve ever stared at a blank page and felt your brain go flat, AI can help. It’s strong at turning rough thoughts into structure.

Here are a few places it usually delivers:

- Outlines and first drafts: Blog posts, scripts, landing page sections, product comparisons.

- Rewrites for clarity: Tighten a rambling paragraph, simplify wording, adjust tone.

- Summaries: Turn a long article, meeting notes, or a transcript into key points.

- Variations: Email subject lines, ad angles, meta descriptions, headline options.

If you want better outputs, give better inputs. A quick prompt upgrade that works almost every time is: include your audience, your goal, and your constraints. For example, “Write this for a beginner affiliate marketer in the US, keep sentences under 20 words, avoid hype, and give a practical next step.”
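If you reuse the same kind of prompt often, the audience, goal, and constraints idea can be captured in a small template. The sketch below is only an illustration: the function name `build_prompt` and the field labels are made up for this example, and the output is plain text you would paste into whichever AI tool you use.

```python
def build_prompt(task: str, audience: str, goal: str, constraints: list[str]) -> str:
    """Assemble a prompt that states audience, goal, and constraints up front.

    Hypothetical helper for illustration; the labels are not required by any tool.
    """
    lines = [
        f"Task: {task}",
        f"Audience: {audience}",
        f"Goal: {goal}",
        "Constraints:",
    ]
    # One bullet per constraint keeps each rule easy to spot and edit.
    lines.extend(f"- {c}" for c in constraints)
    return "\n".join(lines)


prompt = build_prompt(
    task="Write an intro paragraph about choosing a first affiliate program",
    audience="beginner affiliate marketer in the US",
    goal="give a practical next step",
    constraints=["keep sentences under 20 words", "avoid hype"],
)
print(prompt)
```

The point is less the code than the habit: if one of the three fields is hard to fill in, the prompt probably isn’t ready yet.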

And if you’re building content for affiliate marketing, it helps to see how other marketers organize their AI stack. This roundup on Wealthy Affiliate (WA) is a solid reference: time-saving AI tools for affiliate marketers.

Spot Patterns in Data and Text Faster Than a Person Can

AI also does well when you’re trying to find repeating themes. Not “deep truth,” just patterns you can act on.

In plain terms, AI can help you:

- Classify and tag text (support tickets, comments, reviews).

- Pull key fields from messy inputs (names, dates, order numbers, product mentions).

- Group similar feedback (what people complain about most, what they love most).

- Summarize sentiment (positive, negative, mixed), then explain why it thinks so.

This is where AI feels like it has “insight,” because it can scan 500 reviews in seconds. Still, you have to sanity-check the results. AI can miss sarcasm, misread context, and overconfidently label edge cases.

A practical business use is affiliate program research. You can ask AI to list possible partners, then you verify terms on the vendor site. If you want a real example of that workflow, this WA post shows a simple process: Researching Affiliate Programs with AI.

AI is a fast microscope, not a judge. It can show you patterns, but you decide what they mean.

What AI Cannot Do Reliably, Even in 2026

Now for the truth sandwich: AI is helpful, and you can get real work done with it. Yet some limits are built in. They don’t go away just because the model got bigger.

The main issues show up in three places: accuracy, responsibility, and real-world judgment. If you keep those in mind, you’ll avoid most of the painful mistakes.

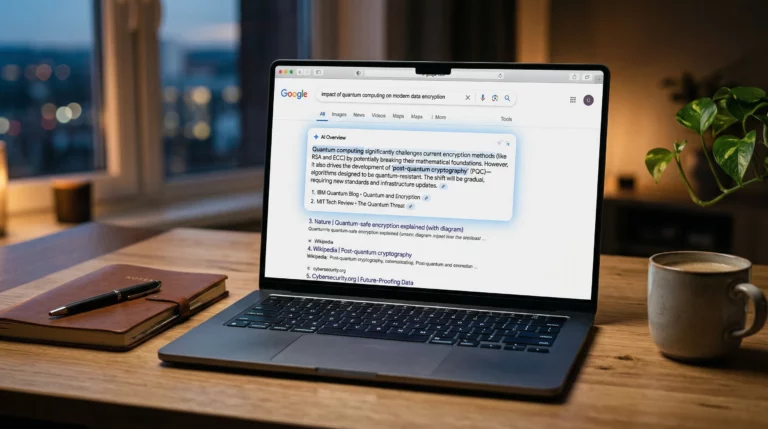

Guarantee the Truth, It Can Sound Confident and Still Be Wrong

AI can hallucinate, which is a polite word for “make stuff up.” It fills gaps with likely-sounding text. That’s not a rare glitch. It’s part of how generative AI works.

Common examples you can picture:

- It invents citations that look real, but don’t exist.

- It gives wrong dates or mixes up people with similar names.

- It lists product features that aren’t in the product.

- It claims a policy exists, then you go to the website and it’s not there.

The tone makes it worse. AI often writes like a polished expert, even when it’s guessing. If you want a straightforward explanation of hallucinations and bias, this primer is useful: When AI gets it wrong.

Here’s a rule I use because it’s easy to remember: if it matters, verify it. Money, health, legal issues, safety claims, and anything you’ll publish under your name should have sources you can check.

Take Responsibility for Outcomes, There Is No Real Accountability

AI can’t “own” a decision. It can’t be accountable, show up in court, or explain itself like a person under pressure. When something goes sideways, the responsibility lands on the human or the business, not the tool.

This matters most in high-stakes areas:

Hiring decisions, loan approvals, medical guidance, legal choices, and financial advice. Even if AI helped you decide, you’re still the one who pushed “yes.”

In the US, there still isn’t one single federal AI law that covers everything (as of March 2026). Instead, oversight shows up through state laws and agency enforcement. Colorado’s AI Act (effective mid-2026) is one example that pushes for risk management and human oversight in high-risk uses. Agencies like the EEOC, FTC, and CFPB also expect you to prevent discrimination and deception when AI touches hiring or consumer decisions.

If you’re building an online business, there’s also a practical ethics layer. Using AI isn’t the issue. Trying to dodge responsibility is. This WA discussion frames it well: AI ethics for entrepreneurs.

The Hidden Problems People Run Into When They Try to Use AI at Work

Most AI failures in the real world don’t happen because someone typed a “bad prompt.” They happen because normal teams are busy, systems are messy, and nobody wrote down the rules.

The risk isn’t always dramatic, either. Sometimes it’s just quiet damage, like sending the wrong email, publishing shaky claims, or letting private info slip into the wrong place.

Privacy, Security, and “Oops, We Pasted Sensitive Data” Moments

This is the one that sneaks up on people. Someone pastes customer data into a chatbot to “clean it up.” Or they drop internal numbers into a prompt to get a summary. It feels harmless in the moment.

Data leaks happen through:

Shared accounts, unclear tool settings, prompt logs, saved chat history, weak access control, and employees using “shadow AI” tools that IT never approved. On top of that, attackers now use AI to move faster, which raises the stakes for everyone. IBM’s 2026 reporting is a good reminder that AI helps defenders and attackers: IBM 2026 X-Force Threat Index.

Safe habits that don’t require a big budget:

Remove personal data before you paste anything, use approved tools, avoid uploading confidential files, and add a human review step for anything customer-facing. Also, keep a simple policy. If your team can’t explain the policy in one minute, it won’t get followed.
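For the “remove personal data before you paste” habit, a rough first pass can be scripted. This is a minimal sketch, assuming you only care about email addresses and US-style 10-digit phone numbers; the patterns and the `redact` function are illustrative, and they will miss names, addresses, account numbers, and many other formats, so a human check is still needed.

```python
import re

# Illustrative patterns only; real personal data comes in many more shapes.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\b\d{3}[\s.-]?\d{3}[\s.-]?\d{4}\b")


def redact(text: str) -> str:
    """Replace email addresses and 10-digit phone numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


sample = "Contact jane.doe@example.com or 555-123-4567 about order 8841."
print(redact(sample))
```

Treat a script like this as a seatbelt, not a guarantee. It lowers the odds of an obvious slip, while the policy and the human review step do the real work.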

Bad Inputs Create Bad Outputs, and Edge Cases Break the Spell

AI looks amazing in a demo because demos are clean. Real work is not clean.

Two common issues show up fast:

Outdated info and missing context. AI can also struggle when situations get rare or sensitive (edge cases). That’s why it can perform well 90 percent of the time, then cause a problem on the 10 percent that actually matters.

Here’s a simple example: a support team uses AI to draft replies. Most of the responses are fine. Then a customer mentions a refund dispute, a medical situation, or a safety complaint. The AI responds with a cheerful template, and the business looks careless.

So yes, use AI to speed up support drafts. Just don’t let it run unattended. In practice, the “human in the loop” part isn’t optional. It’s the whole point.

A Simple Way to Decide When to Use AI, and When to Avoid It

If you want a simple filter, stop asking “Can AI do this?” and start asking two questions:

- What’s the risk if it’s wrong?

- How easy is it to check?

That’s it. Those two questions will keep you out of trouble.

The Green, Yellow, Red Test for Everyday AI Tasks

Here’s the quick framework, in table form, so you can screenshot it.

| Light | Best For | Why It Fits | Your Rule |
|---|---|---|---|
| Green | Drafts, summaries, idea lists, simple rewrites | Low risk, easy to verify | Edit for your voice and accuracy |
| Yellow | How-to advice, comparisons, numbers, claims | Medium risk, needs sources | Require sources, then double-check |
| Red | Medical, legal, HR decisions, private customer data | High risk, hard to verify | Don’t use AI as the decision-maker |

The takeaway is plain: if you can’t verify it, don’t publish it, and don’t act on it.
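If your team wants the two questions written down as an explicit rule, the test can be sketched in a few lines. The mapping here is one possible reading of the table, not an official standard: the function name `traffic_light` is hypothetical, and sending the “low risk but hard to check” case to yellow is an assumption made for this example.

```python
def traffic_light(risk: str, easy_to_check: bool) -> str:
    """Map the two questions (risk if wrong, ease of checking) to a light.

    Assumed mapping for illustration:
      - high risk -> red, no matter how easy it is to check
      - low risk and easy to check -> green
      - everything else -> yellow
    """
    if risk not in {"low", "medium", "high"}:
        raise ValueError("risk must be 'low', 'medium', or 'high'")
    if risk == "high":
        return "red"
    if risk == "low" and easy_to_check:
        return "green"
    return "yellow"


# Hypothetical tasks, rated by a person rather than by the tool.
tasks = {
    "outline a blog post": ("low", True),
    "compare two product prices": ("medium", True),
    "decide on a job candidate": ("high", False),
}
for name, (risk, easy) in tasks.items():
    print(f"{name}: {traffic_light(risk, easy)}")
```

Note that a person still supplies the risk rating. The code only makes the rule consistent once that judgment has been made.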

How to Get Value Without Getting Burned: Prompts, Proof, and a Final Human Pass

You don’t need a perfect system. You need a repeatable one. Here’s a tight checklist that works for content and business tasks:

- Define the goal: What will “done” look like?

- Add constraints: Audience, tone, length, and what to avoid.

- Ask for assumptions: Make the model list what it’s assuming.

- Request sources (for yellow-light work): Then verify those sources yourself.

- Cross-check key claims: Dates, prices, statistics, and policies.

- Do a bias and tone check: Watch for harsh language or stereotypes.

- Put your name on it: One person stays accountable for the final output.

If you’re choosing between different AI models for content work, you’ll notice they handle tone and context differently. This WA comparison can help you think through the trade-offs: comparing top AI models for affiliate marketers.

Conclusion

AI can save time and boost output, and that’s real. Still, it can’t guarantee truth, take responsibility, or understand consequences the way people do. Your advantage in 2026 isn’t having AI. It’s having judgment.

Pick one green-light task to try this week (like outlining a post or summarizing notes). Then commit to one rule you won’t break: verification before publishing. That habit sounds basic, but it keeps you in control, which is the whole point.